Recommender Engineering

Software and Experiments on Recommender Systems

September 12, 2014

Copyright © 2014 Michael Ekstrand. All rights reserved.

#1tweetresearch

Different recommender algorithms have different behaviors. I try to figure out what those are.

… in relation to user needs

… so we can design effective recommenders

… because I'm curious

Broader Goal

To help people find things.

- human-computer interaction

- information retrieval

- machine learning

- artificial intelligence

- recommender systems

Recommender Systems

recommending items to users

Recommender Approaches

Many algorithms:

- Content-based filtering

- Neighbor-based collaborative filtering [Resnick et al., 1994; Sarwar et al., 2001]

- Matrix factorization [Deerwester et al., 1990; Sarwar et al., 2000; Chen et al., 2012]

- Neural networks [Jennings & Higuchi, 1993; Salakhutdinov et al., 2007]

- Graph search [Aggarwal et al., 1999; McNee et al., 2002]

- Hybrids [Burke, 2002]

Key Question

Does recommendation work?

Does this recommender work?

Which recommender works ‘better’?

how?

why?

when?

Research Overview

I try to answer these questions with several tools:

LensKit software helps me (and others) experiment with different recommendation approaches

Offline experiments study what recommender algorithms do on existing data sets

User studies examine how users respond to recommendations

all from a human-computer interaction perspective

LensKit: an open-source toolkit for building, researching, and learning about recommender systems.

LensKit Features

- build
  - APIs for recommendation tasks
  - implementations of common algorithms
  - integrates with databases, web apps

- research
  - measure recommender behavior on many data sets
  - drive user studies and other advanced experiments
  - flexible, reconfigurable algorithms

- learn
  - open-source code
  - study production-grade implementations

LensKit Project

- Open-source, developed in public on GitHub

- Java-based with modern patterns and libraries

- 45K lines of code

- Running production systems (MovieLens, Confer)

- Used for several published papers

LensKit Algorithms

- Simple means (global, user, item, user-item)

- User-user CF

- Item-item CF

- Biased matrix factorization (FunkSVD)

- Slope-One

- OrdRec (wrapper, needs testing)
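
The simplest entries in this list are mean baselines. As an illustration, here is a minimal Java sketch of a user-item mean: the global mean plus an item offset plus a user offset. The `Rating` class, the `damping` parameter, and all other names are hypothetical, and this is not LensKit's own implementation.

```java
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/**
 * Sketch of a user-item mean baseline: prediction = mu + b_i + b_u, where mu is
 * the global mean rating, b_i is the item's mean offset from mu, and b_u is the
 * user's mean offset from mu + b_i. The damping term is an illustrative assumption.
 */
final class UserItemMeanBaseline {
    /** Hypothetical minimal rating record. */
    static final class Rating {
        final long user;
        final long item;
        final double value;

        Rating(long user, long item, double value) {
            this.user = user;
            this.item = item;
            this.value = value;
        }
    }

    private final double globalMean;
    private final Map<Long, Double> itemOffsets = new HashMap<>();
    private final Map<Long, Double> userOffsets = new HashMap<>();

    UserItemMeanBaseline(List<Rating> ratings, double damping) {
        double sum = 0;
        for (Rating r : ratings) {
            sum += r.value;
        }
        globalMean = ratings.isEmpty() ? 0 : sum / ratings.size();

        // Item offsets: damped mean of (r - mu) over each item's ratings.
        Map<Long, double[]> itemAcc = new HashMap<>(); // item -> {sum, count}
        for (Rating r : ratings) {
            double[] acc = itemAcc.computeIfAbsent(r.item, k -> new double[2]);
            acc[0] += r.value - globalMean;
            acc[1] += 1;
        }
        itemAcc.forEach((item, acc) -> itemOffsets.put(item, acc[0] / (acc[1] + damping)));

        // User offsets: damped mean of (r - mu - b_i) over each user's ratings.
        Map<Long, double[]> userAcc = new HashMap<>(); // user -> {sum, count}
        for (Rating r : ratings) {
            double[] acc = userAcc.computeIfAbsent(r.user, k -> new double[2]);
            acc[0] += r.value - globalMean - itemOffsets.get(r.item);
            acc[1] += 1;
        }
        userAcc.forEach((user, acc) -> userOffsets.put(user, acc[0] / (acc[1] + damping)));
    }

    /** Predicts a rating; unknown users or items contribute a zero offset. */
    double predict(long user, long item) {
        return globalMean
                + itemOffsets.getOrDefault(item, 0.0)
                + userOffsets.getOrDefault(user, 0.0);
    }
}
```

A positive `damping` shrinks offsets computed from few ratings toward zero; with `damping = 0` the offsets are plain means.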

Algorithm Architecture

Principle: build algorithms from reusable, reconfigurable components.

Benefits

- Reproduce many configurations

- Try new ideas by replacing one piece

- Reuse pieces in new algorithms
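
To make "try new ideas by replacing one piece" concrete, here is a hypothetical Java sketch in which a neighbor-finding component receives its similarity function through its constructor, so swapping cosine similarity for a dot product changes one piece without touching the rest. The interface and class names are illustrative assumptions, not LensKit's API; a dependency injector such as Grapht automates this kind of wiring.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** A pluggable similarity component (hypothetical interface). */
interface VectorSimilarity {
    double similarity(double[] a, double[] b);
}

/** Cosine similarity between two dense vectors; 0 if either is all zeros. */
final class CosineSimilarity implements VectorSimilarity {
    @Override
    public double similarity(double[] a, double[] b) {
        double dot = 0, na = 0, nb = 0;
        for (int i = 0; i < a.length; i++) {
            dot += a[i] * b[i];
            na += a[i] * a[i];
            nb += b[i] * b[i];
        }
        return (na == 0 || nb == 0) ? 0 : dot / Math.sqrt(na * nb);
    }
}

/** Raw dot product: an alternative piece that can be swapped in. */
final class DotProductSimilarity implements VectorSimilarity {
    @Override
    public double similarity(double[] a, double[] b) {
        double dot = 0;
        for (int i = 0; i < a.length; i++) {
            dot += a[i] * b[i];
        }
        return dot;
    }
}

/** A component built from another component supplied via its constructor. */
final class NeighborFinder {
    private final VectorSimilarity similarity;
    private final int neighborhoodSize;

    NeighborFinder(VectorSimilarity similarity, int neighborhoodSize) {
        this.similarity = similarity;
        this.neighborhoodSize = neighborhoodSize;
    }

    /** Returns up to neighborhoodSize keys of the candidates most similar to target. */
    List<String> neighbors(double[] target, Map<String, double[]> candidates) {
        Map<String, Double> scores = new HashMap<>();
        for (Map.Entry<String, double[]> e : candidates.entrySet()) {
            scores.put(e.getKey(), similarity.similarity(target, e.getValue()));
        }
        List<String> keys = new ArrayList<>(scores.keySet());
        keys.sort((x, y) -> Double.compare(scores.get(y), scores.get(x))); // descending
        return new ArrayList<>(keys.subList(0, Math.min(neighborhoodSize, keys.size())));
    }
}
```

Usage: `new NeighborFinder(new CosineSimilarity(), 20)` and `new NeighborFinder(new DotProductSimilarity(), 20)` differ only in the injected piece.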

Grapht

Java-based dependency injector to configure and manipulate algorithms.

Algorithms are first-class objects

- Detect shared components (pre-build models, reuse parts in evaluation)

- Draw diagrams

Context-sensitive policy: components can be arbitrarily composed

Extensive defaulting capabilities

Offline Experiments

Take data set

- MovieLens ratings

- Yahoo! Music ratings

- Echo Nest play counts (in progress)

- ACM Digital Library citations

and see what the recommender does

Use previously collected data to estimate a recommender's usefulness.

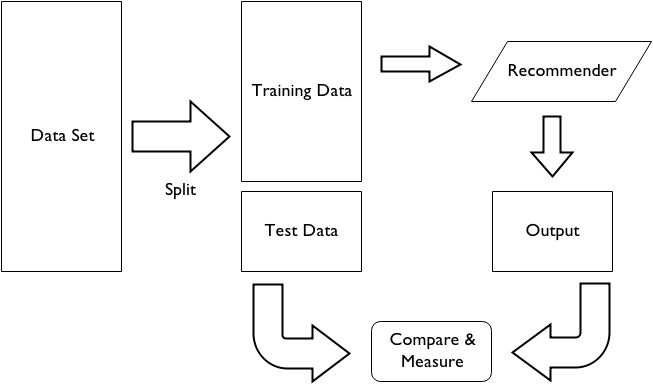
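
One common way to turn a data set into an offline experiment is to hold out some of each user's ratings as a test set and train on the rest. The sketch below assumes a fixed number of held-out ratings per user and a seeded shuffle; the names are hypothetical, and this is not LensKit's evaluator code.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Random;

/** Sketch of a per-user holdout split into training and test ratings. */
final class HoldoutSplit {
    /** Hypothetical minimal rating record. */
    static final class Rating {
        final long user;
        final long item;
        final double value;

        Rating(long user, long item, double value) {
            this.user = user;
            this.item = item;
            this.value = value;
        }
    }

    final List<Rating> train = new ArrayList<>();
    final List<Rating> test = new ArrayList<>();

    /**
     * Shuffles each user's ratings with a seeded RNG and moves up to
     * {@code holdout} of them into the test set, always leaving at least one
     * rating in the training set for that user.
     */
    HoldoutSplit(List<Rating> ratings, int holdout, long seed) {
        Random rng = new Random(seed);
        Map<Long, List<Rating>> byUser = new LinkedHashMap<>();
        for (Rating r : ratings) {
            byUser.computeIfAbsent(r.user, k -> new ArrayList<>()).add(r);
        }
        for (List<Rating> userRatings : byUser.values()) {
            Collections.shuffle(userRatings, rng);
            int n = Math.max(0, Math.min(holdout, userRatings.size() - 1));
            test.addAll(userRatings.subList(0, n));
            train.addAll(userRatings.subList(n, userRatings.size()));
        }
    }
}
```

The recommender is then trained on `train` and asked to predict or rank the items in `test`.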

Offline Architecture

Compare and Measure

How accurately are ratings predicted?

- very commonly studied

- useful for tuning performance
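
Prediction accuracy is usually summarized with root mean squared error (RMSE) or mean absolute error (MAE) over held-out ratings. A minimal sketch, with hypothetical names:

```java
/** Sketch of two common prediction-accuracy metrics over paired arrays. */
final class PredictionAccuracy {
    private static void check(double[] predicted, double[] actual) {
        if (predicted.length != actual.length || predicted.length == 0) {
            throw new IllegalArgumentException("need equal-length, non-empty arrays");
        }
    }

    /** Root mean squared error: sqrt(mean((p - r)^2)). */
    static double rmse(double[] predicted, double[] actual) {
        check(predicted, actual);
        double sse = 0;
        for (int i = 0; i < predicted.length; i++) {
            double err = predicted[i] - actual[i];
            sse += err * err;
        }
        return Math.sqrt(sse / predicted.length);
    }

    /** Mean absolute error: mean(|p - r|). */
    static double mae(double[] predicted, double[] actual) {
        check(predicted, actual);
        double sae = 0;
        for (int i = 0; i < predicted.length; i++) {
            sae += Math.abs(predicted[i] - actual[i]);
        }
        return sae / predicted.length;
    }
}
```

RMSE squares each error, so it penalizes a few large mistakes more heavily than MAE does.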

Can the recommender find things the user bought?

- increasingly common question

- very difficult to do well

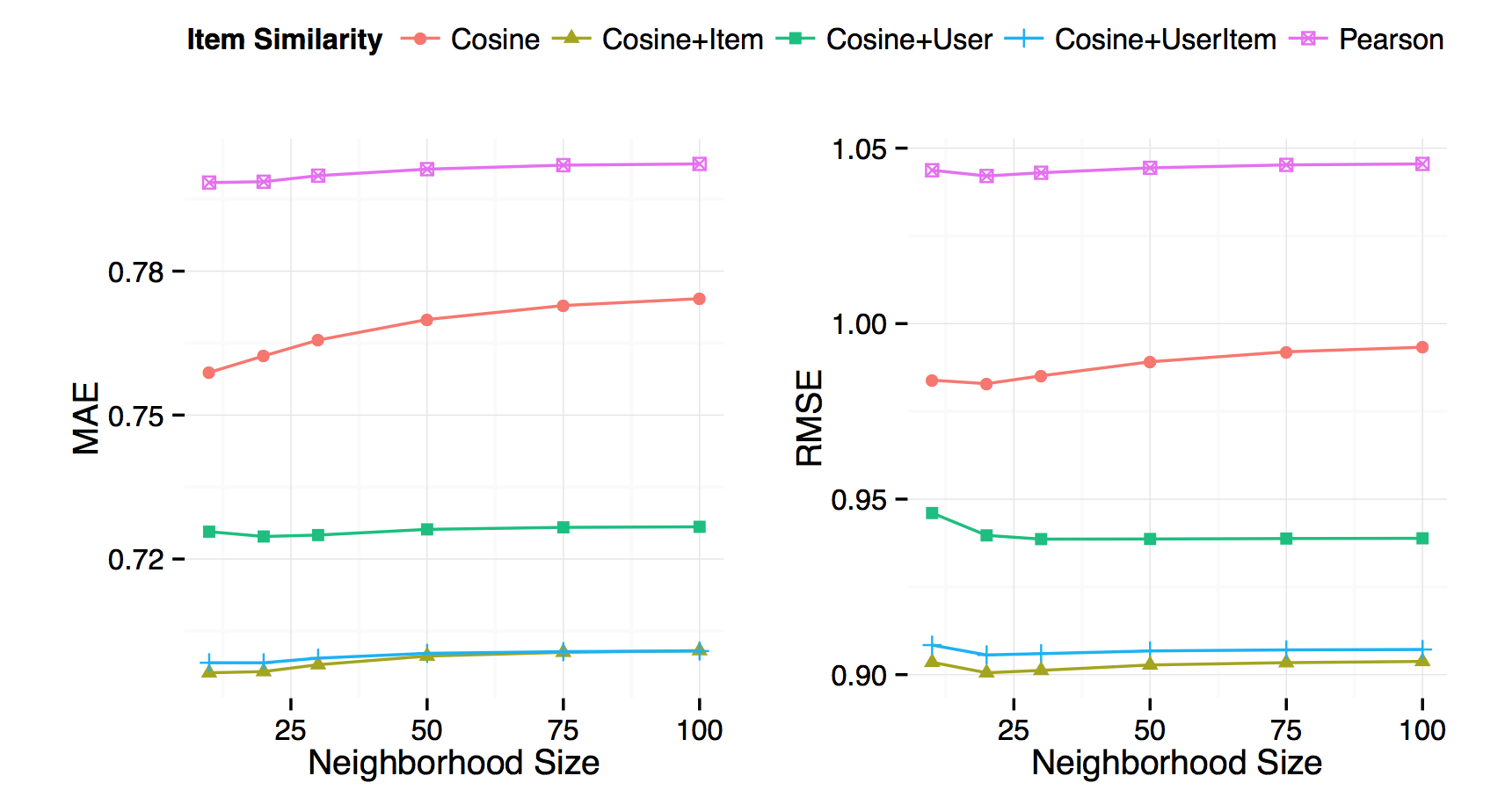
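
Whether the recommender finds things the user bought is typically measured on a top-N list, for example with precision and recall at N against the user's held-out items. The sketch below uses one common definition (precision divides by N); other conventions exist, and the names are hypothetical.

```java
import java.util.List;
import java.util.Set;

/** Sketch of precision and recall at N for a single user's top-N list. */
final class TopNMetrics {
    /** Counts relevant items among the first n recommendations. */
    private static int hits(List<Long> recommended, Set<Long> relevant, int n) {
        int count = 0;
        for (int i = 0; i < Math.min(n, recommended.size()); i++) {
            if (relevant.contains(recommended.get(i))) {
                count++;
            }
        }
        return count;
    }

    /** Fraction of the first n recommendations that are relevant (divides by n). */
    static double precisionAtN(List<Long> recommended, Set<Long> relevant, int n) {
        return n <= 0 ? 0 : (double) hits(recommended, relevant, n) / n;
    }

    /** Fraction of the relevant items that appear in the first n recommendations. */
    static double recallAtN(List<Long> recommended, Set<Long> relevant, int n) {
        return relevant.isEmpty() ? 0 : (double) hits(recommended, relevant, n) / relevant.size();
    }
}
```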

Tuning Algorithms

- Different algorithms

- Algorithms have different parameters

- How to pick?
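
The most basic answer to "how to pick?" is an exhaustive sweep: evaluate each candidate parameter value and keep the best. This sketch assumes a caller-supplied error function (for example, RMSE on a validation split kept separate from the final test data); `bestParameter` and the neighborhood-size framing are hypothetical.

```java
import java.util.function.IntToDoubleFunction;

/** Sketch of a one-parameter grid search that minimizes a validation error. */
final class GridSearch {
    /**
     * Evaluates every candidate value (e.g. a neighborhood size) with the
     * supplied error function and returns the value with the lowest error.
     */
    static int bestParameter(int[] candidates, IntToDoubleFunction validationError) {
        if (candidates.length == 0) {
            throw new IllegalArgumentException("no candidates");
        }
        int best = candidates[0];
        double bestError = Double.POSITIVE_INFINITY;
        for (int c : candidates) {
            double err = validationError.applyAsDouble(c);
            if (err < bestError) {
                bestError = err;
                best = c;
            }
        }
        return best;
    }
}
```

Sweeps grow multiplicatively with the number of parameters, which is one motivation for smarter automatic tuning.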

Example Output

Testing different variants.

Tuning Research

Previous work:

- explore tunings of different algorithms

- develop strategies for picking them

Future work:

- AutoTune the recommender

- Explore impact of different metrics

New Ways of Measuring

Need new ways of measuring recommender behavior:

- new correctness measures

- replay over time

measure additional properties

- diversity

- novelty

- ‘serendipity’

- stability
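
Diversity and novelty have several competing definitions. As one illustration, the sketch below computes intra-list diversity as the mean pairwise cosine distance between item feature vectors (tag genome vectors are one possible source) and novelty as the mean self-information of the recommended items. Both definitions, the add-one smoothing, and the names are assumptions for illustration.

```java
import java.util.List;
import java.util.Map;

/** Sketch of two list-level properties: diversity and novelty. */
final class ListProperties {
    /** Cosine similarity; 0 if either vector is all zeros. */
    static double cosine(double[] a, double[] b) {
        double dot = 0, na = 0, nb = 0;
        for (int i = 0; i < a.length; i++) {
            dot += a[i] * b[i];
            na += a[i] * a[i];
            nb += b[i] * b[i];
        }
        return (na == 0 || nb == 0) ? 0 : dot / Math.sqrt(na * nb);
    }

    /** Intra-list diversity: mean of (1 - cosine) over all pairs of items in the list. */
    static double intraListDiversity(List<double[]> itemVectors) {
        int n = itemVectors.size();
        if (n < 2) {
            return 0;
        }
        double total = 0;
        int pairs = 0;
        for (int i = 0; i < n; i++) {
            for (int j = i + 1; j < n; j++) {
                total += 1 - cosine(itemVectors.get(i), itemVectors.get(j));
                pairs++;
            }
        }
        return total / pairs;
    }

    /** Novelty: mean of -log2(fraction of users who rated the item), add-one smoothed. */
    static double meanSelfInformation(List<Long> items, Map<Long, Integer> ratingCounts, int numUsers) {
        if (items.isEmpty()) {
            return 0;
        }
        double total = 0;
        for (long item : items) {
            int count = ratingCounts.getOrDefault(item, 0);
            double p = (count + 1.0) / (numUsers + 1.0); // smoothing is an assumption
            total += -Math.log(p) / Math.log(2);
        }
        return total / items.size();
    }
}
```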

When Recommenders Fail

Short paper, RecSys 2012; ML-10M data

Counting mispredictions (|p − r| > 0.5) gives a different picture than prediction error.

Consider per-user fraction correct and RMSE:

- Correlation is 0.41

- Agreement on best algorithm: 32.1%

- Rank-consistent for overall performance

Also:

- Different algorithms make different mistakes

- Different users have different best algorithms
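
The per-user comparison above can be sketched as follows: for each user, count the predictions within 0.5 of the actual rating (the threshold from the text) and compute RMSE, then correlate the two measures across users. The names are hypothetical, and this does not reproduce the paper's analysis code or results.

```java
import java.util.HashMap;
import java.util.Map;

/** Sketch of per-user fraction correct and RMSE, plus a Pearson correlation. */
final class PerUserErrors {
    /** A prediction counts as correct when |p - r| <= 0.5. */
    static final double THRESHOLD = 0.5;

    final Map<Long, Double> fractionCorrect = new HashMap<>();
    final Map<Long, Double> rmse = new HashMap<>();

    PerUserErrors(long[] users, double[] predicted, double[] actual) {
        Map<Long, double[]> acc = new HashMap<>(); // user -> {count, correct, sumSquaredError}
        for (int i = 0; i < users.length; i++) {
            double err = predicted[i] - actual[i];
            double[] a = acc.computeIfAbsent(users[i], k -> new double[3]);
            a[0] += 1;
            if (Math.abs(err) <= THRESHOLD) {
                a[1] += 1;
            }
            a[2] += err * err;
        }
        for (Map.Entry<Long, double[]> e : acc.entrySet()) {
            double[] a = e.getValue();
            fractionCorrect.put(e.getKey(), a[1] / a[0]);
            rmse.put(e.getKey(), Math.sqrt(a[2] / a[0]));
        }
    }

    /** Pearson correlation of two equal-length arrays (NaN if either has zero variance). */
    static double pearson(double[] x, double[] y) {
        if (x.length != y.length || x.length < 2) {
            throw new IllegalArgumentException("need two equal-length arrays of size >= 2");
        }
        double mx = 0, my = 0;
        for (int i = 0; i < x.length; i++) {
            mx += x[i];
            my += y[i];
        }
        mx /= x.length;
        my /= y.length;
        double sxy = 0, sxx = 0, syy = 0;
        for (int i = 0; i < x.length; i++) {
            double dx = x[i] - mx, dy = y[i] - my;
            sxy += dx * dy;
            sxx += dx * dx;
            syy += dy * dy;
        }
        return sxy / Math.sqrt(sxx * syy);
    }
}
```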

Marginal Correct Predictions

Q1: Which algorithm has the most successes (error ϵ = |p − r| ≤ 0.5)?

Q(n+1): Which has the most successes where algorithms 1…n failed?

| Algorithm | # Good | % Good | Cum. % Good |
|---|---|---|---|
| ItemItem | 859,600 | 53.0 | 53.0 |
| UserUser | 131,356 | 8.1 | 61.1 |
| Lucene | 69,375 | 4.3 | 65.4 |
| FunkSVD | 44,960 | 2.8 | 68.2 |
| Mean | 16,470 | 1.0 | 69.2 |
| Unexplained | 498,850 | 30.8 | 100.0 |
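
The greedy procedure behind this table can be sketched as follows: given, for each algorithm, which predictions it got right, repeatedly pick the algorithm with the most correct predictions not already covered by earlier picks. The input layout and names are assumptions for illustration.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Sketch of the greedy "marginal correct predictions" analysis. */
final class MarginalCorrect {
    /**
     * correct.get(name)[i] is true if that algorithm predicted item i within
     * the threshold. Returns algorithm -> marginal count in selection order,
     * followed by "Unexplained" for predictions no algorithm got right.
     */
    static LinkedHashMap<String, Integer> analyze(Map<String, boolean[]> correct, int numPredictions) {
        boolean[] covered = new boolean[numPredictions];
        Map<String, boolean[]> remaining = new LinkedHashMap<>(correct);
        LinkedHashMap<String, Integer> result = new LinkedHashMap<>();
        while (!remaining.isEmpty()) {
            String bestName = null;
            int bestCount = -1;
            // Find the algorithm that adds the most not-yet-covered successes.
            for (Map.Entry<String, boolean[]> e : remaining.entrySet()) {
                boolean[] ok = e.getValue();
                int count = 0;
                for (int i = 0; i < numPredictions; i++) {
                    if (ok[i] && !covered[i]) {
                        count++;
                    }
                }
                if (count > bestCount) {
                    bestCount = count;
                    bestName = e.getKey();
                }
            }
            boolean[] chosen = remaining.remove(bestName);
            for (int i = 0; i < numPredictions; i++) {
                if (chosen[i]) {
                    covered[i] = true;
                }
            }
            result.put(bestName, bestCount);
        }
        int unexplained = 0;
        for (boolean c : covered) {
            if (!c) {
                unexplained++;
            }
        }
        result.put("Unexplained", unexplained);
        return result;
    }
}
```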

Future Work

- Building new methods & metrics in LensKit

- Running experiments to quantify behavior

- Calibrate metrics against real user measures

User-Based Research

Offline has problems:

- does methodology really test user experience?

- do metrics matter?

- are there other things users care about?

Answers: sort of, maybe, and yes.

User Study

Goal: identify user-perceptible differences.

- RQ1: How do user-perceptible differences affect choice of algorithm?

- RQ2: What differences do users perceive between algorithms?

- RQ3: How do objective metrics relate to subjective perceptions?

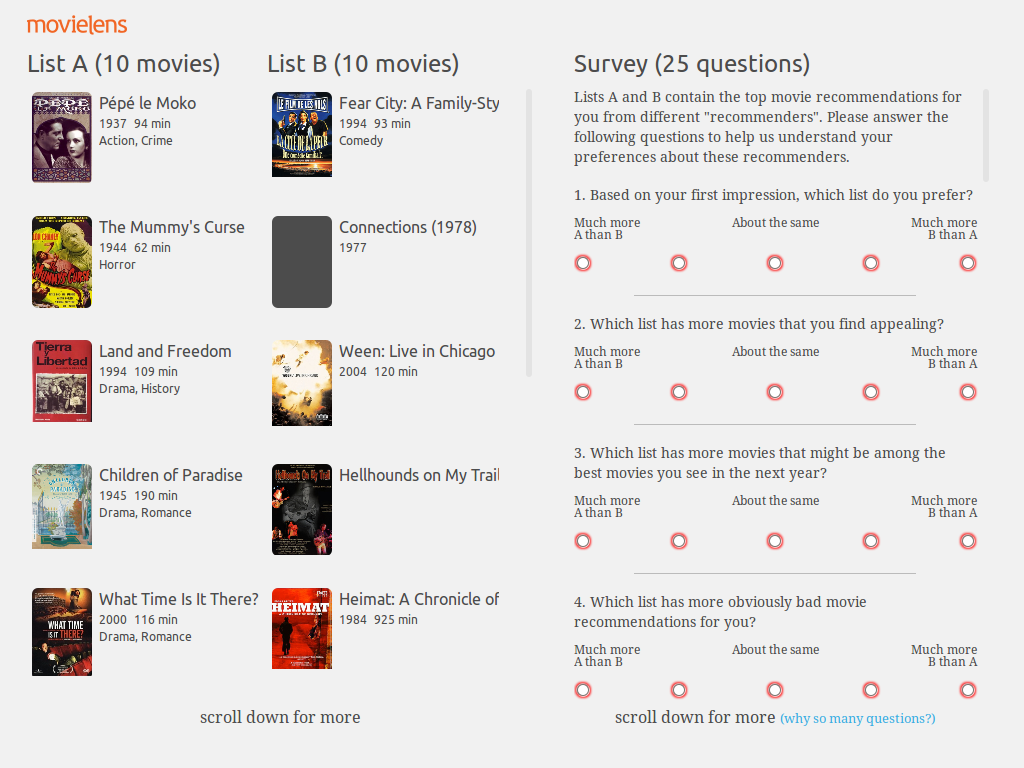

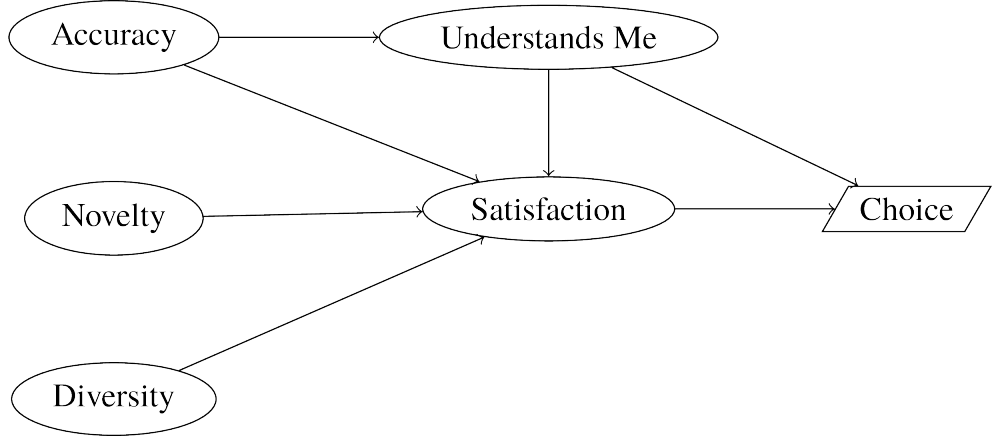

Context: MovieLens

- Movie recommendation service & community

- 2500–3000 unique users/month

- Extensive tagging features

- Launching new version this summer

- Experiment deployed as intro to beta access

Algorithms

Three well-known algorithms for recommendation:

- User-user CF

- Item-item CF

- Biased matrix factorization (FunkSVD)

- All restricted to 2500 most popular movies

Each user assigned 2 algorithms
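
A hypothetical sketch of the setup described above: restrict the candidate items to the most-rated movies, and draw two distinct algorithms for each user. The uniform random draw, the seeding, and the names are assumptions; this is not the deployed MovieLens code.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.Map;
import java.util.Random;
import java.util.stream.Collectors;

/** Sketch of candidate restriction and per-user algorithm assignment. */
final class StudySetup {
    static final List<String> ALGORITHMS = Arrays.asList("ItemItem", "UserUser", "FunkSVD");

    /** Draws two distinct algorithms uniformly at random. */
    static List<String> assignPair(Random rng) {
        List<String> shuffled = new ArrayList<>(ALGORITHMS);
        Collections.shuffle(shuffled, rng);
        return new ArrayList<>(shuffled.subList(0, 2));
    }

    /** Returns the k items with the most ratings, most popular first. */
    static List<Long> mostPopular(Map<Long, Integer> ratingCounts, int k) {
        return ratingCounts.entrySet().stream()
                .sorted(Map.Entry.<Long, Integer>comparingByValue().reversed())
                .limit(k)
                .map(Map.Entry::getKey)
                .collect(Collectors.toList());
    }
}
```

Under these assumptions, `mostPopular(counts, 2500)` would give the shared candidate set that every algorithm ranks.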

Survey Design

Initial ‘which do you like better?’

22 questions

- ‘Which list has more movies that you find appealing?’

- ‘much more A than B’ to ‘much more B than A’

- Target 5 concepts

Final ‘which do you like better?’

Designed in collaboration w/ decision psychologist

Example Questions

- Diversity: Which list has a more varied selection of movies?

- Satisfaction: Which recommender would better help you find movies to watch?

- Novelty: Which list has more movies you do not expect?

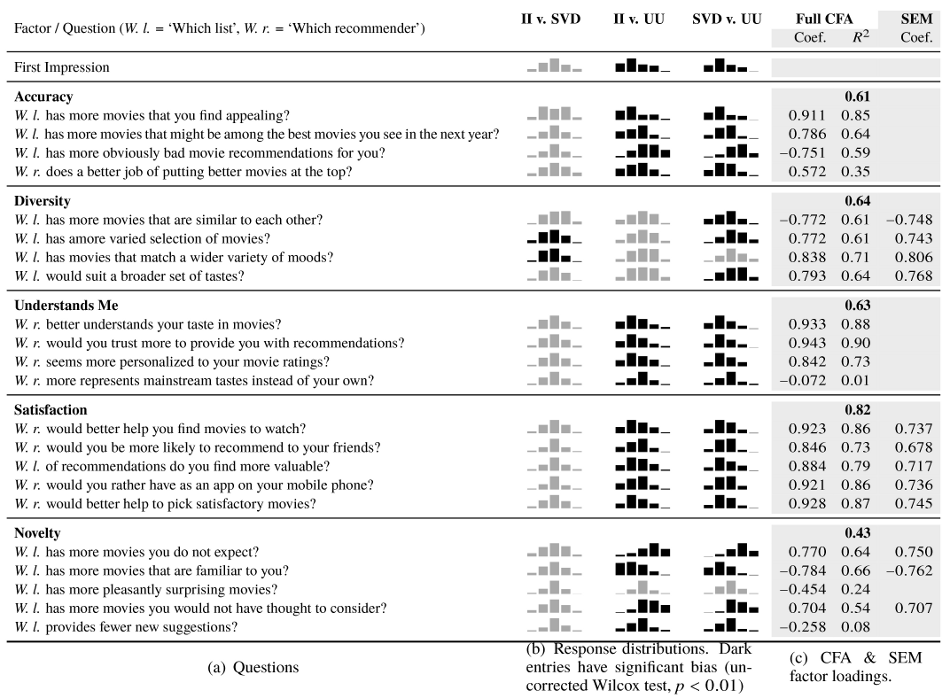

Analysis features

- joint evaluation
  - users compare 2 lists
  - judgment-making different from separate eval
  - enables more subtle distinctions
  - hard to interpret

- factor analysis
  - 22 questions measure 5 factors
  - more robust than single questions

- structural equation model tests relationships

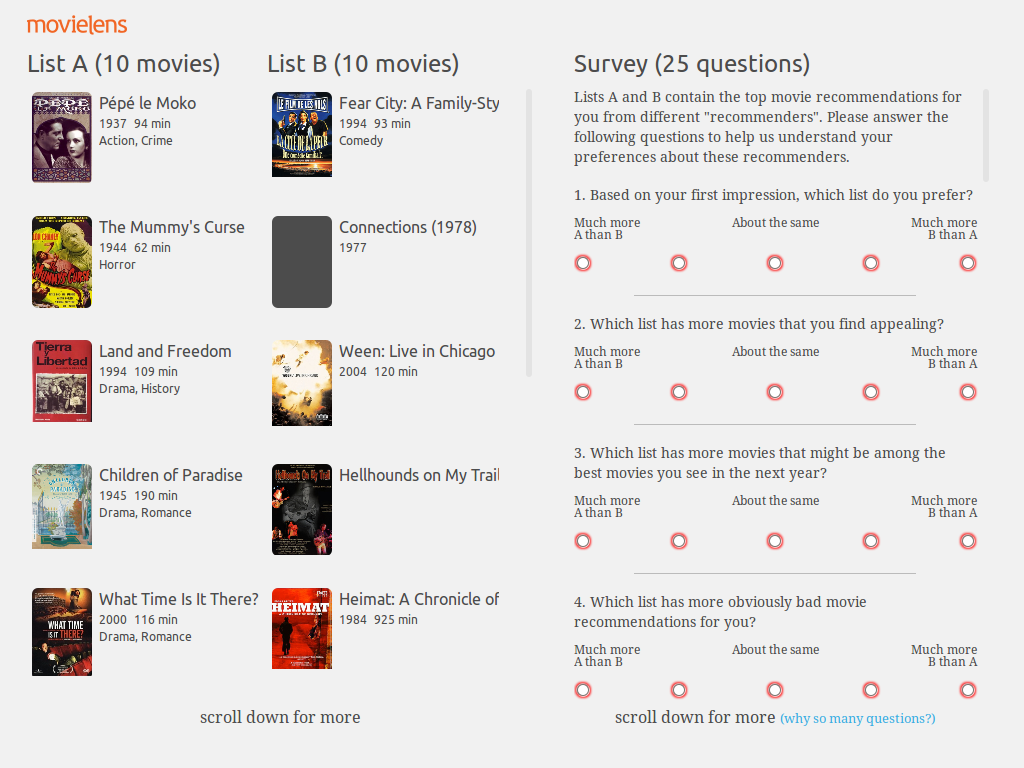

Hypothesized Model

Response Summary

582 users completed

| Condition (A v. B) | N | Pick A | Pick B | % Pick B |
|---|---|---|---|---|
| I-I v. U-U | 201 | 144 | 57 | **28.4%** |
| I-I v. SVD | 198 | 101 | 97 | 49.0% |
| SVD v. U-U | 183 | 136 | 47 | **25.7%** |

Bold is significant (p < 0.001; H0: b/n = 0.5, where b is the Pick B count).
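
The significance note corresponds to a binomial test of whether users pick B half the time. An exact two-sided version for p = 0.5 can be sketched as below; the class name is hypothetical, and the iterative probability computation is only valid while 0.5^n does not underflow (n up to about 1000).

```java
/** Sketch of an exact two-sided binomial (sign) test with H0: b/n = 0.5. */
final class SignTest {
    /**
     * By symmetry of Binomial(n, 0.5), the two-sided p-value is
     * 2 * P(X <= min(b, n - b)), capped at 1.
     */
    static double twoSidedP(int b, int n) {
        if (n <= 0 || b < 0 || b > n) {
            throw new IllegalArgumentException("need n > 0 and 0 <= b <= n");
        }
        int k = Math.min(b, n - b);
        double pmf = Math.pow(0.5, n); // P(X = 0)
        double cdf = pmf;
        for (int i = 0; i < k; i++) {
            // P(X = i + 1) = P(X = i) * (n - i) / (i + 1)
            pmf = pmf * (n - i) / (i + 1);
            cdf += pmf;
        }
        return Math.min(1.0, 2 * cdf);
    }
}
```

Applied to the table's counts, `twoSidedP(57, 201)` and `twoSidedP(47, 183)` both fall far below 0.001, while `twoSidedP(97, 198)` is nowhere near significant.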

Question Responses

Measurement Model

- Multi-level linear model

- Direction comes from theory

- Condition variables omitted for simplicity

Differences from Hypothesis

- No Accuracy, Understands Me

- Edge from Novelty to Diversity

Summary

Novelty has complex, largely negative effect

- Exact use case likely matters

- Complements McNee's notion of trust-building

Diversity is important, mildly influenced by novelty.

- Tag genome measures perceptible diversity best, but its advantage is small.

User-user loses (likely due to obscure recommendations), but users are split on item-item vs. SVD

Consistent responses, reanalysis, and objective metrics

Results and Expectations

Commonly-held offline beliefs:

- Novelty is good

- Diversity and accuracy trade off

Perceptual results (here and elsewhere):

- Novelty is complex

- Diversity and accuracy both achievable

What We've Done

Collected user feedback

that validates some results

and challenges others

To do: validate more metrics

What You Can Do

Software development

- well-established Java code base

- advanced object-oriented programming

Develop and run experiments w/ data

Hopefully: develop & analyze user experiments

Collaborate

- Other HCI, ML, recsys researchers

- Psychology

Requirements

Programming

- In Java (or a related language like C#) preferred

- Can cross-train from C++, Python, Ruby

I can teach you the machine learning, recommender systems, and HCI

At this point, anyone working with me will be involved in LensKit development.

good technical writing skills a plus

Background Reading

If you want to do some reading in prep:

- Effective Java, 2nd Ed. (Bloch)

- Collaborative Filtering Recommender Systems

- LensKit documentation and videos

- Coursera course videos

Things We'll be Working On

- new temporal evaluator for LensKit

- new metrics and algorithms

- experiments to map metrics to user study data

- building new user studies

How to Get Involved

e-mail me at

learn-by-doing

- work on task

- submit code for review

- iterate until ready

- merge!